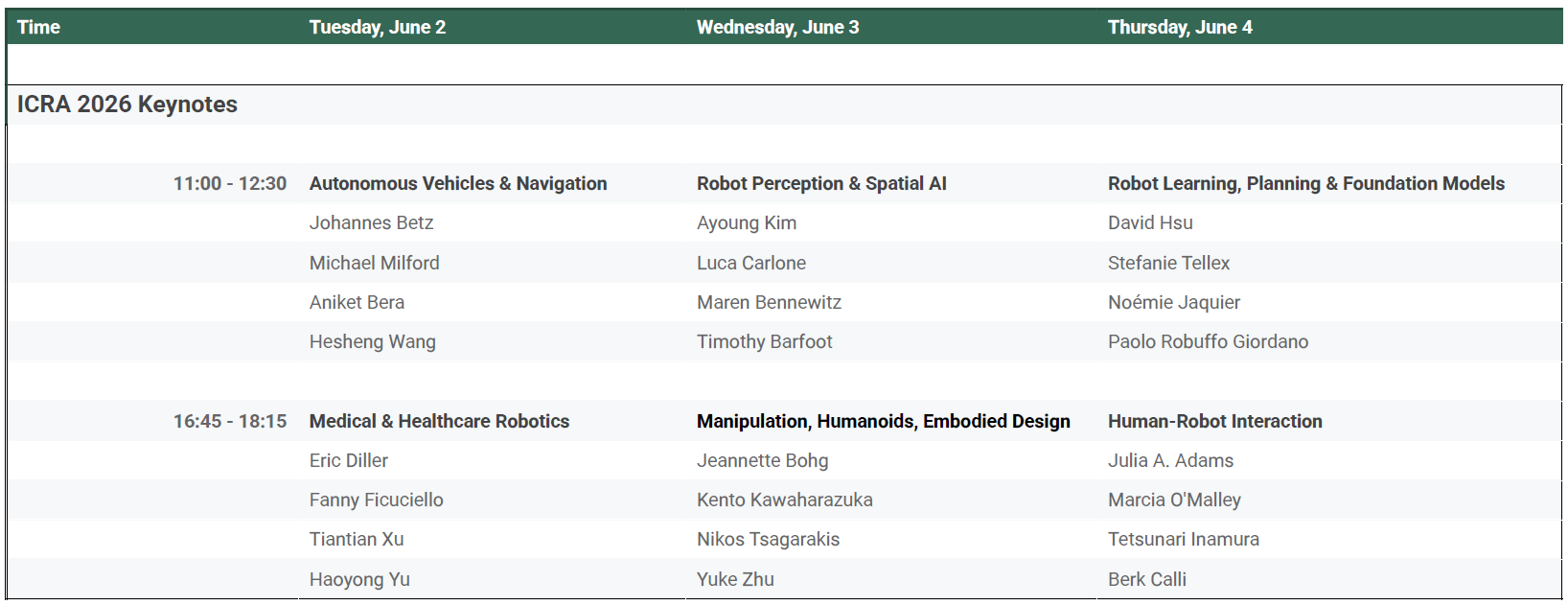

ICRA 2026 Keynote Sessions

Keynote Sessions at a Glance

An overview of the Keynote Sessions can be seen below.

Tuesday, 2 June

Title: Autonomous Vehicles & Navigation

Johannes Betz, Technical University of Munich

Title: Learning to Handle Autonomous Vehicles at the Limits – Lessons Learned from Real-World Autonomous Motorsport

Abstract

Can an autonomous car drive faster than a human racecar driver? In this talk, we answer this question by presenting the methods and algorithms that enable an autonomous vehicle to operate at the physical limits of vehicle dynamics. Emphasis is placed on learning-based motion planning and control strategies that enable robust decision-making under uncertainty and rapidly changing conditions. We present case studies from autonomous racing competitions, such as the Abu Dhabi Racing League, that illustrate how these approaches push vehicles to their performance boundaries while ensuring safety and reliability.

BIO

Johannes Betz received bachelor’s and master’s degrees in automotive technology and mechatronics. He received his PhD in 2019 from TU Munich. From 2018 to 2020 he was a postdoc at the Chair of Automotive Engineering at TU Munich, where he founded the TUM Autonomous Motorsport Team, which successfully participated in international autonomous racing series. From 2020 to 2022 he was a postdoctoral fellow at the University of Pennsylvania, USA, where he worked in the xLab for Safe Autonomous Systems. In 2023, he was appointed as Rudolf Mößbauer Professor at the Technical University of Munich, where he holds the Professorship for Autonomous Vehicle Systems.

Michael Milford, QUT Centre for Robotics

Title: From Neuroscience to Autonomous Vehicle Navigation

Abstract

For roboticists, nature is an amazing inspiration: animals, insects and even humans are capable of amazing feats that are currently far beyond the capabilities of robots. Over two decades, our research group has drawn inspiration from nature’s best navigation systems to create high performance navigation systems for robots like autonomous vehicles. Our inspiration is twofold: we work with neuroscientists who study the neural mechanisms underpinning navigation in the brain, but also look at navigation behaviours, whether it be an ant moving over a sand dune or a Monarch butterfly travelling the globe. It can be a bumpy career journey, especially early in your career, moving from fundamental research inspiration to industry collaboration, working on on-road and off-road autonomous vehicles operating in exotic locations like underground mine sites. We’ve learnt much along the way about how robotics research is performed and evaluated, and the limitations of current benchmarks and metrics for predicting the eventual utility of research that starts in the lab. In this talk, I’ll take the audience on a journey of inspiration, breakthroughs, frustration, and unsolved questions in the quest to develop better robot navigation systems and in doing so, better understand how we ourselves navigate the world.

BIO

Michael Milford, FTSE, conducts interdisciplinary research at the intersection of robotics and neuroscience, specializing in areas like autonomous vehicle positioning. He currently holds appointments as Australian Research Council Laureate Fellow, Director of the QUT Centre for Robotics, QUT Professor, Microsoft Research Faculty Fellow, and Fellow of the Australian Academy of Technology and Engineering. He has led or co-led projects totalling more than 56 million dollars in research and industry funding, and has received over 18,000 citations and sixteen best paper awards and finalist placings. Flagship projects include the Local Positioning System, an initiative to provide a ubiquitous global alternative to GPS, and being part of the ELO2 Consortium selected to deliver Australia’s first Lunar rover. Michael founded Math Thrills, which produces STEM edutainment distributed to over 35 countries. He is a keen mentor at global scale through initiatives like Hacking Academia, for which he won the 2025 Outstanding Mentor of Researchers Eureka Prize.

Aniket Bera, Purdue University

Title: Toward Behaviorally-Intelligent Robots: Safe Navigation in Unstructured and Human-Centered Environments

Abstract

Robots are increasingly expected to operate beyond structured settings and enter dynamic, uncertain, physically unstructured environments shaped by continuous human activity. In such settings, safe navigation is not merely a path-planning problem. It requires robots to perceive complex scenes, reason under uncertainty, anticipate human behavior, and adapt their actions in real time while maintaining reliability and safety. This talk presents a vision for behaviorally intelligent robots: embodied systems that do not simply react to obstacles but instead build computational models of motion, interaction, and risk to navigate effectively in unstructured and human-centered environments. I will discuss recent advances at the intersection of robotics, embodied AI, machine learning, and geometric reasoning that support this goal, including multimodal perception, semantic scene understanding, uncertainty-aware world modeling, human behavior prediction, and adaptive planning.

A central theme of the talk is that progress in robot autonomy will require coupling the expressive power of modern AI with an explicit safety structure. Rich learned representations and semantic reasoning must be integrated with formal constraints, structured decision-making, and physically grounded models so that robot behavior remains adaptive yet dependable when the world is ambiguous. I will argue that the future of safe robot navigation lies in integrated autonomy stacks that connect sensing, interpretation, prediction, planning, and action. Such a shift is essential for building robots that are not only more capable, but also safer, more trustworthy, and more deployable in the real-world environments where they are needed most.

BIO

Prof. Aniket Bera is an Associate Professor in the Department of Computer Science at Purdue University, where he directs the IDEAS Lab (Intelligent Design for Exploration and Augmented Systems). His research focuses on embodied AI and robotics, with an emphasis on how robots perceive, reason, plan, and interact safely in complex, dynamic, and human-centered environments. His group develops methods in safety-critical motion planning, intent-aware and human-aware navigation, multi-agent forecasting, multimodal perception, digital twins, and learning-enabled autonomy for embodied systems. He works closely with federal partners, including the Department of Defense and the Department of Energy, as well as with major industry collaborators such as Adobe Research, Intel, Disney Research, Eli Lilly, NVIDIA, and others. Dr. Bera serves as a Senior Editor for IEEE Robotics and Automation Letters (RA-L) in Planning & Simulation. His research has received multiple Best Paper awards and finalist honors in robotics and graphics/VR venues, including ICRA, IEEE VR, and ISMAR.

Hesheng Wang, Global College, Shanghai Jiao Tong University

Title: Learning to Navigate: From Scene Understanding to Decision Making

Abstract

Robot navigation spans a range of interconnected problems, from static to dynamic environments, rigid to deformable modeling, reconstruction to generation, and perception to decision making. This talk provides a unified perspective on these components and outlines a forward-looking framework for next-generation navigation systems. We begin with a new paradigm for scene understanding that encompasses semantic mapping and end-to-end reconstruction, where large vision models and learned representations of the physical world enable scalable, high-fidelity, and generalizable scene representation. We then extend to dynamic and partially observable environments, addressing challenges arising from motion and occlusion. We also introduce deformable SLAM to handle non-rigid environments, significantly expanding the scope of navigation. Furthermore, we discuss differentiable rendering and generative modeling as key tools for constructing predictive world representations. Finally, we propose learning-based navigation frameworks that incorporate human-inspired memory and reasoning mechanisms to support long-term, efficient decision-making. Throughout the talk, we emphasize the central role of learning in unifying these components, outlining a path toward embodied intelligence in complex real-world environments.

BIO

Hesheng Wang is Tang Jun Yuan Chair Professor and Dean of Global College, Shanghai Jiao Tong University, Shanghai, China. His current research interests include intelligent robotics, computer vision, and autonomous driving. Dr. Wang is an Editor-in-Chief of Robot Learning, an Associate Editor of Robotic Intelligence and Automation, and a Senior Editor of the IEEE/ASME Transactions on Mechatronics. He served as an Associate Editor of the IEEE Transactions on Robotics from 2015 to 2019, an Associate Editor of the IEEE Transactions on Automation Science and Engineering from 2021 to 2023, a Technical Editor of the IEEE/ASME Transactions on Mechatronics from 2020 to 2023, and an Editor of the Conference Editorial Board (CEB) of the IEEE Robotics and Automation Society from 2022 to 2024. He was the General Chair of IEEE/RSJ IROS 2025 and IEEE ROBIO 2022, and the Program Chair of IEEE/ASME AIM 2019.

Tuesday, 2 June

Title: Medical & Healthcare Robotics

Eric Diller, Robotics Institute at the University of Toronto

Title: Using Magnetic Fields to Control Tiny Robots in the Gut and Brain

Abstract

Millimeter-scale robots can enter small spaces in the body in a versatile and non-invasive manner, and promise medical applications in surgery, sensing, and intervention. Realizing functional machines at this scale requires a unique approach to robotic power, control, and sensing.

In this talk, I will show how we use multiple tiny magnets embedded within the body of our robots to enable multiple-degree-of-freedom actuation, control and sensing without any physical connection, onboard power or computation. Robotic behaviors such as grasping, cutting, taking of samples, and sensing are all achieved with magnetic fields generated outside the body.

I will show how this approach enables these small-scale robots to operate as functional tools inside the body. These include dexterous surgical tools operating through narrow neuroendoscopic channels in the brain, ingestible capsules for sampling in the gut, and deployable mechanisms to aid in gastrointestinal procedures.

I will show our progress toward clinical applications in the gut and in neurosurgery, and discuss how operating at millimeter scales reshapes the way we design and control robotic systems.

BIO

Eric Diller received the B.S. and M.S. degrees in mechanical engineering from Case Western Reserve University in 2010 and the Ph.D. degree in mechanical engineering from Carnegie Mellon University in 2013. He is currently a Professor in the Department of Mechanical and Industrial Engineering and the Robotics Institute at the University of Toronto, where he is director of the Microrobotics Laboratory. His research interests include micro-scale robotics, with a focus on fabrication and control for the remote actuation of micro-scale devices using magnetic fields, micro-scale robotic manipulation, and smart materials. He is an Associate Editor of the Journal of Micro-Bio Robotics, and received the IEEE Robotics & Automation Society 2020 Early Career Award. He has also received the 2018 Ontario Early Researcher Award, the University of Toronto Innovation Award, and the Canadian Society of Mechanical Engineering’s 2018 I.W. Smith Award for research contributions in medical microrobotics.

Fanny Ficuciello, University of Naples Federico II

Title: From Bioinspired Design to Safe Control: Emerging Challenges in Medical Robotics

Abstract

Healthcare is undergoing a profound transformation, driven by rapid technological advances. The convergence of artificial intelligence and robotics with medicine is opening new frontiers in patient care and treatment. The use of innovative materials and bio-inspired designs will underpin the next generation of robots. On the other hand, the use of soft and deformable structures complicates system modelling and control. Precisely controlling these bodies requires the development of new approaches, thus opening unprecedented challenges and unexplored frontiers in the field of robot control. The challenges are related not only to deformations at contact, but also to the unpredictability of the environment, the difficulty of measuring it, and the safety that must be constantly and significantly guaranteed. Supervised autonomy aims to blend human expert knowledge with the precision of robotic systems. In medical robotics, it represents an intermediate level of automation in which the robot independently performs specific tasks, while the physician acts as a high-level supervisor. The focus is on bioinspired design and control algorithms to achieve supervised autonomy in which humans always maintain significant control over the robot’s behavior. This talk explores fundamental challenges from blue-sky research to prototypes aspiring to be certified and ultimately become products for a rapidly expanding market.

BIO

Fanny Ficuciello is an Associate Professor of Robotics at the University of Naples Federico II, Italy. She directs the SuR Lab (Surgical Robotics Lab) and B2R Lab (Biomimetics and Biohybrid Robotics Lab) of the PRISMA Team at the University of Naples Federico II. Her research focuses on biomechanical design and bio-aware control strategies for anthropomorphic artificial hands, grasping and manipulation, human-robot interaction, and surgical and rehabilitation robotics. She has published more than 200 journal and conference papers and holds four medical device patents. She is an IEEE Senior Member. From 2018 to 2022, she was on the Technology Committee of the European Association of Endoscopic Surgery (EAES). She has served as Associate Editor for Transactions on Robotics, Journal of Intelligent Service Robotics, and Transactions on Medical Robotics and Bionics. Since January 2026, she has been Editor-in-Chief of Transactions on Medical Robotics and Bionics.

Tiantian Xu, Shenzhen Institutes of Advanced Technology (SIAT)

Title: Magnetically Actuated Microrobots for Precision Medicine

Abstract

Magnetically actuated microrobots can be remotely and wirelessly controlled via magnetic fields, enabling them to navigate complex and confined spaces that are otherwise inaccessible in the human body. This technology holds great promise for precision biomedical applications. In this talk, the speaker will present a comprehensive research framework spanning from microscale to human-scale systems. She has developed a human-scale magnetic actuation system and corresponding software platform, enabling applications from targeted drug delivery at the micro/nanoscale to remote control of continuum interventional robots at the whole-body scale. A flexible Hall sensor array patch and a two-stage magnetic localization paradigm are proposed to achieve sub-millimeter in vivo localization. Reinforcement learning-based control strategies are introduced to enable high-precision trajectory tracking under ultrasound guidance and in highly disturbed environments. Furthermore, a biohybrid magnetic hydrogel fiber robot constructed from autologous blood is proposed for precise targeting and treatment of glioma. In addition, a magnetically actuated flexible fiber electrode robot is developed for reconfigurable, long-term, multi-channel in vivo electrophysiological monitoring, breaking the traditional paradigm of static implanted electrodes.

BIO

Tiantian Xu is a Professor at the Shenzhen Institutes of Advanced Technology (SIAT), Chinese Academy of Sciences, and a recipient of the NSFC young outstanding scholarship. She has led multiple major research projects, including the NSFC Joint Key Program and the National Key R&D Program (Young Scientists Project). Her research focuses on magnetically driven microrobots for targeted therapy. She has established methods for autonomous path planning and coordinated control of microrobots, developed integrated system platforms, and advanced their biomedical applications. She has published over 30 high-impact IEEE Transactions papers as first or corresponding author (including four in IEEE Transactions on Robotics), and more than 10 papers as corresponding author in leading journals such as Nature (2 papers), Nature Biomedical Engineering, Nature Sensors, and Advanced Materials. She currently serves as an Associate Editor or Editorial Board Member for several leading robotics journals, including IEEE T-RO, T-MECH, T-ASE, and RA-L.

https://english.siat.ac.cn/

Haoyong Yu, National University of Singapore (NUS)

Title: Towards Wearable Robotics with Better Portability, Safety, and Comfort

Abstract

With the rapid population aging in many developed countries, wearable robotics, commonly known as exoskeleton robots, are believed to have wide applications in both industry and healthcare. However, the current market size of wearable robotics is still quite small compared with other robotics sectors due to the limitations in portability, safety, and user comfort.

At the NUS Biorobotics Lab, we are developing a series of wearable robots for rehabilitation and worker assistance. We adopt a modular approach based on a set of core technologies and components developed in the lab, which includes compliant actuation, cable-drive mechanisms, wearable sensors, and learning-based movement detection algorithms. We achieved better portability with a novel differential mechanism design and cable-driven transmission, safer human-robot interaction with compliant actuation, and better human-robot movement synchronization with novel sensing strategies.

In this talk, I will give a brief introduction to some of our wearable robots in real-world applications.

BIO

Haoyong Yu is an Associate Professor in the Department of Biomedical Engineering at the National University of Singapore (NUS). He received his bachelor’s and master’s degrees from Shanghai Jiao Tong University and his PhD degree from the Massachusetts Institute of Technology (MIT). He directs the Biorobotics Lab at NUS. His current research interests include biomedical robotics and devices, rehabilitation engineering and assistive technology, service robots, human-robot interaction, intelligent control, and machine learning. He has published more than 250 journal papers in robotics and control areas and has applied for more than 20 international patents. His team has developed a series of wearable robots for medical and industrial applications. Prof. Yu is currently the Senior Editor of IEEE Robotics and Automation Letters.

Wednesday, 3 June

Title: Robot Perception & Spatial AI

Ayoung Kim, Seoul National University (SNU)

Title: The Underdog Sensors: Are Robots Using Thermal and Radar Right?

Abstract

Thermal cameras and radar are routinely dismissed in robotics as noisy, low-contrast, and hard to integrate. But the real bottleneck is methodology, not the sensors. This talk addresses thermal infrared head-on: we show that sensor-appropriate calibration, proper 14-bit tone mapping, and VLM-driven RGB-to-thermal translation collectively close the gap that has kept thermal out of mainstream perception pipelines. We then turn to radar, where direct Doppler velocity measurement offers underexploited advantages for state estimation far beyond what sparse point clouds suggest. Together, these two sensors represent an underutilized frontier worth revisiting.

BIO

Ayoung Kim is a Professor in the Department of Mechanical Engineering at Seoul National University (SNU), where she leads the Robust Perception for Mobile Robotics (RPM) Lab. Her research focuses on perceptual robotics, SLAM, state estimation, and spatial representation learning across diverse sensor modalities, including LiDAR, radar, thermal infrared, and vision. She is a co-editor of the forthcoming SLAM Handbook, a comprehensive reference for the field. She received her B.S. and M.S. in Mechanical Engineering from SNU, and her M.S. in Electrical Engineering and Ph.D. in Mechanical Engineering from the University of Michigan, Ann Arbor. Before joining SNU in 2021, she was a professor at KAIST (2014-2021), and has held visiting positions at the Oxford Robotics Institute and the Boston Dynamics AI Institute. Within the IEEE Robotics and Automation Society, she currently serves as Senior Editor of IEEE Robotics and Automation Letters (RA-L) and has previously served as Associate Editor of IEEE Transactions on Robotics (T-RO). She is a recipient of the 2019 Korea Robotics Society Young Researcher Award and the 2016 Young Scientist recognition from the World Economic Forum, and is an IEEE RAS Distinguished Lecturer (2026-2028).

https://rpm.snu.ac.kr/

Luca Carlone, Massachusetts Institute of Technology (MIT)

Title: Maps, Memory, and Tasks: Toward Spatial AI for the Next Generation of Robots

Abstract

Robotics is at an inflection point. For decades, we have built systems that reconstruct the world with increasing geometric precision—yet true autonomy requires more than maps. In this talk, I will highlight three emerging directions that are reshaping how robots perceive, remember, and act. First, I will discuss geometric foundation models—transformer-based, feedforward approaches to 3D reconstruction—that challenge the dominance of classical SLAM. Using a geometric vision perspective, I will outline the fundamental limits of these methods and show how these insights lead to more accurate and scalable systems. Second, I will move beyond mapping to memory: how robots can build persistent, human-like representations of their environments. I will present recent progress on language-enabled memory systems that allow robots to store, retrieve, and reason about information far beyond geometry. Finally, I will argue that both perception and memory must be task-driven. In complex, long-horizon settings, intelligence depends not on storing everything, but on attending to what matters. I will discuss emerging approaches that integrate tasks directly into perception and memory. Together, these trends point toward a new paradigm: spatial AI systems that move beyond mapping to provide actionable understanding and memory of the environment—enabling robust, long-horizon autonomy in the real world.

BIO

Luca Carlone is an Associate Professor in the Department of Aeronautics and Astronautics at the Massachusetts Institute of Technology (MIT), a Principal Investigator in the Laboratory for Information and Decision Systems (LIDS), and an Amazon Scholar. His research interests lie in estimation, learning, and optimization for perception and decision-making in single- and multi-robot systems. His work includes seminal contributions to certifiably correct perception algorithms, 3D scene graphs and Spatial AI, visual-inertial navigation, and multi-robot mapping. He is the recipient of the 2024 Outstanding Systems Paper Award at RSS, the 2022 and 2017 IEEE Transactions on Robotics King-Sun Fu Memorial Best Paper Award, the Best Student Paper Award at IROS 2021, and the Best Paper Award in Robot Vision at ICRA 2020, among others. He has also received the NSF CAREER Award, the RSS Early Career Award, the Google Daydream Award, two Amazon Research Awards, and the MIT AeroAstro Vickie Kerrebrock Faculty Award. He is an IEEE Senior Member, an AIAA Associate Fellow, and a Sloan and Kavli Fellow.

Maren Bennewitz, University of Bonn

Title: Advancing Service Robots Through Active Perception: Mapping and Object Search Under Occlusion

Abstract

Active perception enables service robots to intelligently gather information about their environment by choosing promising viewpoints and performing targeted manipulation actions to cover the scene and remove occlusions. This allows robots to enhance their understanding of the world, adapt to scene changes, build 3D models, and locate target objects. In this talk, I will present active perception strategies for household and agricultural scenarios. First, I will explore methods that enable robots to efficiently perceive objects in cluttered environments and will focus on mapping confined spaces such as shelves or narrow crop rows, in which severe occlusions limit visibility. To address these challenges, robots must not only plan informative viewpoints, but also reason about the scene structure and manipulate objects to reduce occlusions. In addition, I will discuss the use of temporal priors for active perception, such that observations over time are leveraged to enable more efficient perception. Overall, these advancements in active perception substantially improve a robot’s ability to act in complex settings. By enhancing environmental understanding, occlusion handling, and object search, the presented methods enable service robots to better assist people with challenging tasks.

BIO

Maren Bennewitz is a Professor of Computer Science at the University of Bonn. She heads the Humanoid Robots Lab and is additionally with the Lamarr Institute for Machine Learning and Artificial Intelligence. She is also a member of the executive board of the Cluster of Excellence PhenoRob – Robotics and Phenotyping for Sustainable Crop Production, a founding member and steering committee member of the Center for Robotics, and vice rector for digitalization of the University of Bonn. She explores novel ways to integrate robots into human environments and contributes to innovative research projects, combining AI and robotics for sustainable agriculture, cultural heritage, and personalized robot service. Her group has introduced several novel methods for active perception, 3D mapping, and planning of navigation and manipulation actions in cluttered and dynamic environments.

Timothy Barfoot, University of Toronto

Title: Why Field Robotics Research Still Matters

Abstract

Roughly speaking, field robotics aims to tackle dull, dirty, and dangerous jobs in mining, agriculture, environmental monitoring, defence, industrial mobility, transportation, space exploration, and so on. What makes field robotics hard is that the operating environment can be extreme in terms of weather, lighting, and terrain; unstructured and unknown; and constraining in terms of mass, power, compute, and communications. In a word: messy.

Today, all eyes are on artificial intelligence and its hopeful transformation of robotics into ‘physical AI’. We have seen learning-based (i.e., data-driven) methods outperform traditional techniques in a variety of messy tasks, particularly perception, natural language processing (e.g., LLMs), and even advanced control (e.g., RL). Given the messiness that we encounter in field robotics, we are already seeing AI helping with key aspects, and this trend is likely to continue.

So what does this mean for academics working in field robotics? Does field robotics research still matter? For me the answer to this question is a resounding yes. Carrying out research motivated by and alongside end users of robotics is still critical to problem understanding, ideation, technology transfer, team building, and of course training. I will use this talk to argue my case through examples gathered over my last 20 years spent as a field roboticist.

BIO

Timothy Barfoot is a Full Professor at the University of Toronto, where he currently serves as the Director of the Robotics Institute. Tim works on autonomy for mobile robots aimed at applications including planetary rovers, defence, and mining. He has participated in field tests in some of Canada’s most remote places, including the Arctic in both summer and winter. Prior to UofT, he worked at MDA Space; from 2017 to 2019 he was Director of Autonomous Systems at Apple, and he currently works part-time as a Distinguished Engineer at Oxa Autonomy. He is a Fellow of the IEEE and has received three paper awards at ICRA (2010, 2021, 2025). Tim is the current Editor-in-Chief of the IEEE Transactions on Field Robotics, author of the book State Estimation for Robotics (Cambridge 2017, 2024), and co-editor/co-author of the SLAM Handbook (Cambridge 2026).

Wednesday, 3 June

Title: Manipulation, Humanoids, Embodied Design

Jeannette Bohg, Computer Science at Stanford University

Title: Do We Still Need Dexterous Hands?

Abstract

With increasingly capable grippers and large-scale imitation learning, the case for dexterous manipulation is worth remaking. I will argue that multi-fingered hands remain essential, not as a technical curiosity, but as the foundation of versatile, high-throughput manipulation that grippers fundamentally cannot deliver. Recent advances in hardware and sim-to-real RL have brought us to a point where a single policy can perform zero-shot tool use across diverse objects and tasks without teleoperation or task-specific engineering. Our work SimToolReal represents the first demonstration of zero-shot dexterous tool use at this scale in the real world, yet the large majority of failures trace not to the motor policy but to the object tracker it depends on. In-the-wild human video, properly retargeted to bridge the embodiment gap, provides a strong signal for closing this gap. Our work Masquerade, which learns from human video to train robot manipulation policies, shows substantial gains in out-of-distribution generalization. A key question shaping how we use this data is the distinction between learning from object state trajectories, which captures what task success requires, and learning from hand motion, which encodes functional priors about tool affordances that are difficult to recover from reward alone. I will outline the open questions at the intersection of these two threads: how to distill RL policies into vision-based controllers, and whether human demonstrations can guide dexterous exploration in ways that pure reinforcement learning cannot.

BIO

Jeannette Bohg is an Associate Professor of Computer Science at Stanford University, where she leads research at the intersection of robotics, machine learning, and computer vision with a focus on autonomous manipulation. Her lab aims to uncover the principles of robust sensorimotor coordination and implement them on real robots. Before joining Stanford, she was a group leader in the Autonomous Motion Department at the Max Planck Institute for Intelligent Systems. She earned her Ph.D. from KTH Royal Institute of Technology in Stockholm, where her thesis introduced novel methods for multi-modal scene understanding in robotic grasping. She also holds an M.Sc. from Chalmers University of Technology and a Diploma in Computer Science from the Technical University of Dresden. Her work has been recognized with multiple Best Paper and Early Career awards, including the 2019 IEEE RAS Early Career Award, the 2020 RSS Early Career Award, and the 2023 Sloan Research Fellowship.

Kento Kawaharazuka, Next Generation AI Research Center, The University of Tokyo

Title: At the Intersection of Biology and Machines: From Musculoskeletal to Wire-driven Robots

Abstract

Recent advances in robotics have enabled a wide range of designs that span biological inspiration and machine-oriented principles. In this keynote, I present our research on exploring this design space at the intersection of biology and machines, focusing on systems ranging from musculoskeletal humanoids to wire-driven robots. We have developed a series of musculoskeletal humanoids that replicate key aspects of human anatomy, including tendon-driven actuation and distributed compliance, enabling flexible and adaptive motions. In parallel, we have investigated wire-driven robotic systems that extend these principles beyond human morphology, allowing for simplified structures, robustness in harsh environments, and scalable system configurations. Through these platforms, I discuss how different design choices in actuation, structure, and control influence motion generation, interaction, and system performance. The talk highlights practical insights gained from building and operating these robots, including trade-offs between biological fidelity and engineering efficiency. By presenting these systems along a continuum from musculoskeletal to wire-driven designs, this keynote aims to provide a concrete perspective on how robot design can be approached across the boundary between biology and machines.

BIO

Kento Kawaharazuka is a Lecturer at the Next Generation AI Research Center, The University of Tokyo, Japan, where he also serves in the Department of Mechano-Informatics. He received his B.E., M.S., and Ph.D. in Information Science and Technology from The University of Tokyo in 2017, 2019, and 2022, respectively, and became a Project Assistant Professor in 2022. He leads research on musculoskeletal humanoid robots, integrating bio-inspired mechanical design, wire-driven actuation systems, and learning-based control. His work aims to bridge embodied intelligence and foundation models, spanning hardware design, control, and large-scale robot learning. He has also been actively developing open-source robotic platforms to accelerate research in embodied AI. His research has been recognized with several awards, including the Best Paper Award at ICRA 2024 and the Mike Stillman Award at Humanoids 2024. He was named one of MIT Technology Review’s Innovators Under 35 Japan 2025.

Nikos Tsagarakis, Istituto Italiano di Tecnologia (IIT)

Title: Modular Bodies and Recovery Capabilities: Building Robots for Unstructured Environments

Abstract

Robots deployed in unstructured environments must operate under uncertainty, support interoperability across diverse tasks, and remain functional despite faults, impacts, and unpredictable conditions. This keynote will present the robot-development approach pursued by the Humanoids and Human-Centered Mechatronics Lab at IIT toward resilient and adaptable robotic systems. The central idea is that the robot body itself should not be seen only as a mechanical carrier of sensors and actuators, but as an active source of capability, robustness, and recovery. The talk will discuss modular robotic systems for construction and infrastructure-oriented tasks, including the CONCERT platform for construction applications and the MIRROR robot for space infrastructure assembly. The talk will then connect this design philosophy to recent work on hybrid legged-wheeled locomotion, briefly introducing a modular and reconfigurable manipulation platform designed to enhance loco-manipulation versatility. Beyond physical adaptability, resilient operation also requires robots to manage the practical limits of deployment, including motion energetics and actuation efficiency, degradation or faults in sensing and actuation, loss of mobility, and the need to restore functionality after critical events. The talk will briefly introduce some examples of ongoing work addressing these challenges and frame them as essential design drivers for the next generation of robotic systems.

BIO

Nikos Tsagarakis is a Tenured Senior Scientist and Principal Investigator of the Humanoids and Human-Centered Mechatronics Research Unit at the Istituto Italiano di Tecnologia (IIT). His research focuses on robot design and control, as well as the development of advanced mechatronic components, including actuation and sensing systems. He has developed several robotic platforms, including the compliant humanoids COMAN, WALK-MAN, and COMAN+, the CENTAURO hybrid wheeled-legged manipulation platform, the MIRROR in-orbit servicing proof-of-concept robot, and the CONCERT modular and configurable mobile manipulation robot. He coordinated the EU WALK-MAN and EU CONCERT projects and has served as principal investigator on numerous European projects. He has also served on the Editorial Boards of IEEE Robotics and Automation Letters and IEEE/ASME Transactions on Mechatronics.

Yuke Zhu, Computer Science Department of UT-Austin

Title: Building Generalist Humanoid Robots

Abstract

In an era of rapid AI progress, leveraging accelerated computing and big data has unlocked new possibilities to develop generalist AI models. As AI systems like ChatGPT showcase remarkable performance in the digital realm, we are compelled to ask: Can we achieve similar breakthroughs in the physical world, creating generalist humanoid robots capable of performing everyday tasks? In this talk, I will present our data-centric research principles and approaches for building general-purpose robot autonomy in the open world. I will discuss our recent work leveraging real-world, synthetic, and web data for training robotic foundation models. By combining these advances with cutting-edge developments in humanoid robotics, I will outline a roadmap for the next generation of autonomous robots.

BIO

Yuke Zhu is an Associate Professor in the Computer Science Department of UT-Austin, where he directs the Robot Perception and Learning (RPL) Lab. He is also a Director and Distinguished Research Scientist at NVIDIA Research, where he co-leads the Generalist Embodied Agent Research (GEAR) lab. He focuses on developing intelligent algorithms for generalist robots and embodied agents to reason about and interact with the real world. He obtained his Master’s and Ph.D. degrees from Stanford University. He received the NSF CAREER Award, the IEEE RAS Early Academic Career Award, and various faculty fellowships and research awards from Amazon, JP Morgan, and Sony Research.

Thursday, 4 June

Title: Robot Learning, Planning & Foundation Models

David Hsu, National University of Singapore

Title: Scalable Robot Decision Making in the Open World: Planning and Plan Prediction with LLMs

Abstract

A hallmark of intelligence is the ability to do the right thing in myriad unfamiliar situations. The classic model-based approach to robotics draws a sharp boundary between the closed, known world and the open, unknown world: robot performance is guaranteed only in known situations. Data-driven robot foundation models, with their vast common-sense knowledge, have blurred this boundary and dramatically expanded robot capabilities in the open world. In this talk, I will argue that the long-term goal of scalable, robust robot intelligence necessitates an integration of model-based planning and data-driven plan prediction. The key issue here is the interplay, rather than the conflict, between structure and data. I will illustrate the general thinking with our work on robots aiming to operate in the open world by generating and verifying hypotheses on the fly.

BIO

David Hsu is a Provost’s Chair Professor in the Department of Computer Science at the National University of Singapore and the Director of the NVIDIA Research Lab in Singapore. He is an IEEE Fellow.

His research lies at the intersection of robotics and AI. In recent years, he has been working on robot planning and learning under uncertainty for human-centered robots. His work has won multiple international awards, most recently the Test of Time Award at Robotics: Science & Systems (RSS) in 2021 and the IJCAI-JAIR Best Paper Prize in 2022. At NUS, he co-founded the NUS Advanced Robotics Center in 2013 and founded the AI Laboratory in 2019. He has chaired or co-chaired several major international robotics conferences, including WAFR 2010, RSS 2015, ICRA 2016, and CoRL 2021. He has served on the editorial boards of both the International Journal of Robotics Research and IEEE Transactions on Robotics.

Stefanie Tellex, Computer Science at Brown University

Title: Towards Complex Language in Partially Observed Environments

Abstract

Robots can act as a force multiplier for people, whether it is a robot assisting an astronaut with a repair on the International Space Station, a UAV taking flight over our cities, or an autonomous vehicle driving through our streets. Existing approaches use action-based representations that do not capture the goal-based meaning of a language expression and do not generalize to partially observed environments. The aim of my research program is to create autonomous robots that can understand complex goal-based commands and execute those commands in partially observed, dynamic environments. I will describe demonstrations of object search in a POMDP setting with information about object locations provided by language, and of mapping between English and Linear Temporal Logic, enabling a robot to understand complex natural language commands in city-scale environments. These advances represent steps towards robots that interpret complex natural language commands in partially observed environments using a decision-theoretic framework.

BIO

Stefanie Tellex is a Professor of Computer Science at Brown University. Her group, the Humans To Robots Lab, creates robots that seamlessly collaborate with people to meet their needs using language, gesture, and probabilistic inference, aiming to empower every person with a collaborative robot. She has published at SIGIR, HRI, RSS, AAAI, IROS, ICAPS and ICMI, winning Best Student Paper at SIGIR and ICMI, Best Paper at RSS, and the RoboCup Best Paper award at IROS. Her work has been featured in the press on National Public Radio, BBC, MIT Technology Review, Wired and Wired UK, as well as the New Yorker. Her work received the AAAI Classic Paper Award for “Understanding Natural Language Commands for Robotic Navigation and Mobile Manipulation” (AAAI 2011), recognizing it as one of the most influential papers from the Twenty-Fifth AAAI Conference.

Noémie Jaquier, KTH Royal Institute of Technology

Title: Traveling the Robot Learning Manifold: A Tale of Geometries and Inductive Biases

Abstract

Robot motions are fundamentally governed by non-Euclidean geometries. Robot state spaces are non-linear manifolds, various robotic variables exhibit distinct geometric characteristics, and data often resides in curved spaces. Although many problems naturally lend themselves to geometric interpretations, these underlying structures are often relegated to the background. In the modern era of data-driven robotics, this omission creates a critical gap, as many contemporary learning algorithms operate on representations that inadvertently ignore or distort the natural geometries of robotics.

This talk explores how differential geometry, arising from data structure, physics, and prior knowledge, provides a rigorous framework to construct representations and learning algorithms that respect and exploit these natural geometries. I will show that the performance of diverse algorithms is significantly enhanced by considering the intrinsic geometric characteristics of data, that complex dynamics are more elegantly learned and accurately controlled within physics-based geometric configuration spaces, and that imposing structured geometry on latent spaces allows for richer representations. Ultimately, I aim to highlight that explicitly encoding differential-geometric structures leads to improved performance, more data-efficient learning, sound guarantees, and robust generalization.

BIO

Noémie Jaquier is an assistant professor at the KTH Royal Institute of Technology, where she heads the Geometric Robot Learning (GeoRob) Lab. She received her PhD from the Ecole Polytechnique Fédérale de Lausanne (EPFL) in 2020. Prior to joining KTH, she was a postdoctoral researcher in the High Performance Humanoid Technologies Lab at the Karlsruhe Institute of Technology (KIT) and a visiting postdoctoral scholar at the Stanford Robotics Lab. Her research investigates data-efficient and theoretically sound learning algorithms that leverage differential geometry- and physics-based inductive bias to endow robots with close-to-human learning and adaptation capabilities. Noémie is the recipient of a WASP-AI/MLX professorship and a starting grant from the Swedish Research Council. She has received several awards, notably the Best Presentation Award at CoRL’19, Best Paper Award Finalists (IROS’23, ICRA’24), the Heidelberg Academy of Sciences Hector-Stiftung Preis 2024, and AI newcomer of Technical and Engineering Sciences 2023 by the German Federal Ministry of Education and Research.

https://njaquier.ch

Paolo Robuffo Giordano, IRISA Rennes

Title: Intrinsic Robustness: A Journey from Control-Aware Planning to Robust Robot Learning

Abstract

As robots transition from controlled labs to unpredictable environments, achieving reliable autonomy in spite of sensor noise, model inaccuracies, and disturbances remains a formidable challenge. However, successful integration of robots into our daily lives depends crucially on their ability to operate safely and reliably despite these uncertainties.

This talk explores our journey in developing computationally tractable methods for real-world robustness using “sensitivity-based” metrics. I will retrace our research progression, starting with mobile robots (UAVs) and manipulator arms. In these domains, we proposed sensitivity-aware offline and online trajectory planning able to explicitly account for model uncertainty to produce intrinsically robust motion plans. I will then discuss recent extensions to more challenging discontinuous, contact-based dynamics, such as in legged locomotion, and collaborative multi-robot missions. A key advantage of our approach lies in its computational tractability: the proposed robustness metrics are amenable to fast (or even real-time) replanning and, crucially, do not require a forced simplification of the robot/environmental model to arrive at a tractable formulation.

Finally, I will discuss our current and future activities on this research journey: moving beyond robust planning to embed these robustness metrics directly into policy learning algorithms. For instance, by integrating principled uncertainty propagation into the training phase, one can synthesize control policies with an “intrinsic robustness layer” against real-world variations, thus significantly mitigating the well-known sim-to-real gap. Our ultimate goal is to merge rigorous robustness metrics with the adaptability of modern robot learning for developing the next generation of motion generation algorithms for modern robots.

BIO

Paolo Robuffo Giordano is a CNRS Senior Research Scientist at IRISA in Rennes, France, where he directs the Rainbow Team common to IRISA and Inria Rennes. His research investigates resilient autonomous systems, focusing on robust planning, uncertainty propagation, multi-robot architectures, and shared control schemes for single and multiple robots. A core objective of his recent work is developing computationally tractable methodologies for injecting an “intrinsic robustness” layer in modern motion generation schemes, including Model Predictive Control and Reinforcement Learning, to safely deploy robots in unstructured environments. Previously, he held research positions at the German Aerospace Center (DLR) and the Max Planck Institute for Biological Cybernetics. He is a recipient of the 2019 Michel Monpetit Award from the French Academy of Sciences, a Distinguished Lecturer for the IEEE RAS Technical Committee on Multi-robot Systems, and a Senior Editor for the IEEE Transactions on Robotics.

Thursday, 4 June

Title: Human-Robot Interaction

Julie A. Adams, Oregon State University

Title: Challenges in Adaptive Robot Teaming: Understanding Human Teammate Performance

Abstract

Human teammates conducting tasks in complex, harsh environments (e.g., wildland fire response) train together and adaptively respond to each other’s performance, often based on subtle indirect cues. Future teaming with humans requires robots to understand their teammates just as seamlessly, which in turn requires robots to assess their human teammates’ performance and adapt appropriately. This talk focuses on developing robot intelligence to understand humans’ inherent performance factors (e.g., workload) based on human-worn sensors amenable to physically challenging in situ teaming domains. The intelligent human performance estimates support progress towards autonomous robot adaptations that can enable effective teaming for challenging, dynamic, and uncertain domains. Developing such intelligent capabilities is exceptionally challenging given the domain characteristics, human individual differences, and design needs. This talk will outline the challenges of developing truly collaborative robot teammates that support their human teammates in complex, harsh domains.

BIO

Julie A. Adams is the College of Engineering Dean’s Professor and the Associate Director of Research for Oregon State University’s Collaborative Robotics and Intelligent Systems Institute. She directs the Human-Machine Teaming Laboratory that develops human-machine teaming, robotics and distributed artificial intelligence capabilities for multiple and swarm aerial, ground and marine robotic systems. She focused on crewed civilian and military aircraft at Honeywell, Inc. and commercial, consumer and industrial systems at the Eastman Kodak Company. Her research is grounded in domains such as disaster response, archaeology, and oceanography. Dr. Adams is an NSF CAREER award recipient, and a Human Factors and Ergonomics Society Fellow. She has contributed to multiple national reports. She served in various IEEE Systems, Man and Cybernetics Society leadership roles, contributed to founding the ACM/IEEE International Conference on Human-Robot Interaction, and is a senior associate editor of the IEEE Transactions on Human-Machine Systems.

Marcia O’Malley, Rice University

Title: Guiding with Touch: Wearable Haptics for Shaping Human–Robot Interaction

Abstract

Despite major advances in sensing and autonomy, most robotic systems interacting with humans remain limited in how they influence human movement and learning. My work examines how wearable haptic interfaces enable physically embodied interaction, allowing robots to guide human motor behavior through touch. In this talk, I will highlight body-conformal, multimodal wearable haptic systems that encode information about movement quality, strategy, and performance. By engaging multiple sensory pathways through modalities such as skin stretch, pressure, and vibration, these interfaces provide expressive, low-latency cues that shape motor adaptation without increasing cognitive load. Across applications including rehabilitation, surgical skill acquisition, and immersive virtual environments, real-time haptic feedback and guidance alter movement strategies and accelerate learning. These results suggest that wearable haptics offer a compelling approach for closing the loop in human–robot interaction through direct, physically embodied control.

BIO

Marcia O’Malley is the Thomas Michael Panos Family Professor of Mechanical Engineering, Computer Science, Electrical and Computer Engineering, and Bioengineering, and Chair of the Department of Mechanical Engineering at Rice University. She received her PhD in Mechanical Engineering from Vanderbilt University. Her research focuses on haptics, physical human–robot interaction, and wearable haptics and robotic systems for rehabilitation and training. She directs the Mechatronics and Haptic Interfaces Laboratory, where her group has pioneered shared-control approaches that advance motor adaptation, skill acquisition, and recovery following neurological injury. Her work has led to over 200 peer‑reviewed publications, and her research has been supported by NSF, NIH, ONR, NASA, and industry. O’Malley is a Fellow of ASME, IEEE, AIMBE, IAMBE, and AAAS. She served as Editor-in-Chief of the IEEE ICRA Editorial Board from 2022–2024 and currently chairs the IEEE ICRA Steering Committee.

Tetsunari Inamura, Brain Science Institute at Tamagawa University

Title: Engineering Human Agency and Self-Efficacy: The Next Frontier of Human-Robot Symbiosis

Abstract

As assistive robotics transitions from laboratories to daily life, a critical paradox emerges: excessive robotic intervention often diminishes “Human Agency”—the fundamental sense of being the initiator of one’s own actions. To achieve true symbiosis, robots must do more than compensate for physical deficits; they must be engineered to foster and maintain the user’s self-efficacy. In this talk, I trace a research trajectory from physical assistance to meta-cognitive empowerment. Drawing on results from the JST Moonshot Goal 3 project, I demonstrate how integrating assistive robots with VR-induced sensory illusions can intentionally manipulate a user’s self-efficacy. By decoupling perceived effort from actual performance, we show that robots can prime the human mind for greater autonomy.

However, true empowerment requires human growth rather than temporary sensory manipulation. Under our JST CREST initiative, I propose the “Self-Mirroring Twins” (SeMT) framework. SeMT is not merely a tool for correcting cognitive errors; it functions as a “meta-cognitive mirror” designed to cultivate the user’s ability for objective self-observation. Through interaction with SeMT, users are prompted to reflect on their own actions and initiate intentional behavior changes to achieve ideal performance. This process enables the acquisition of robust self-efficacy rooted in actual skill improvement. The ultimate goal of this “Engineering of Agency” is to redefine robots as partners that help humans recognize and expand their own boundaries. We aim for a future where robotic evolution ensures that humans become more empowered and capable, rather than more dependent.

BIO

Tetsunari Inamura is a Professor at the Graduate School of Brain Sciences and Director of the Advanced Intelligence & Robotics Research Center within the Brain Science Institute at Tamagawa University. His research pioneers the intersection of robotics and cognitive science, focusing on symbol emergence in humanoid robots, VR-mediated self-efficacy enhancement, and cyber-physical systems for human-robot interaction. Currently, he is the Principal Investigator of the JST CREST “Self-Mirroring Twins” project and a Co-PI for the JST Moonshot Goal 3 and 9 programs. A Fellow and Vice President of the Robotics Society of Japan (RSJ), he holds prominent international leadership roles, including Senior Editor for Advanced Robotics, Co-chair of the IEEE RAS Technical Committee on Cognitive Robotics, and Associate Vice President of the IEEE RAS Conference Activities Board. His work is dedicated to redefining human-robot symbiosis through the engineering of agency and meta-cognitive empowerment.

Berk Çallı, Worcester Polytechnic Institute (WPI)

Title: Overcoming Manipulation Challenges in Environmental Robotics through AI-based Solutions and Human-Robot Partnership

Abstract

Robots have the potential to play a critical role in addressing pressing environmental and climate challenges by enabling new solutions and scaling existing ones. However, most environmental robotics applications require operation in highly unstructured and demanding conditions, where variability, uncertainty, and complexity pose significant challenges. These challenges are significantly amplified in applications requiring robotic object manipulation. At the same time, the urgency of these problems calls for deployable solutions in the near term, rather than relying solely on long-term visions of full autonomy. This talk presents a perspective on developing practical robotic systems for environmental applications by combining robotic manipulation techniques, efficient generation of AI models, and human–robot partnership. Focusing on robotic shipbreaking and waste sorting, I will discuss how real-world challenges can be translated into concrete research questions in perception, learning, and manipulation. In particular, I will present approaches for scalable dataset generation through automatic labeling, vision-based control strategies for handling uncertainty, and ensemble learning-based grasping methods to improve robustness and adaptability. These examples illustrate how tightly integrating real-world deployment with methodological advances can accelerate the use of robotics in addressing environmental problems.

BIO

Berk Çallı is an Associate Professor in the Robotics Engineering Department at Worcester Polytechnic Institute (WPI), where he leads the Manipulation and Environmental Robotics (MER) Laboratory. His research focuses on addressing environmental challenges through robotics as well as fundamental problems in robotic manipulation. His work spans robotic grasping, in-hand manipulation, soft robot control, robot learning, and active vision strategies. He received the U.S. National Science Foundation CAREER Award in 2024. He is a lead organizer of the Climate Robotics Summit, the COMPARE community ecosystem, and the ICRA Robotic Grasping and Manipulation Competition. Prior to joining WPI, he was a postdoctoral researcher at the Yale University GRAB Lab. He received his PhD from Delft University of Technology in the Netherlands and his MS and BS degrees in Mechatronics Engineering from Sabancı University, Turkey.